Automated backup of database

In this section, we provide instructions how to run pg_dump utility

and transfer the database dump file to S3 bucket automatically by schedule.

Create a user account backup with specific privileges

Since this is an automated script, the best practice is to create a user with just the permissions needed that can be used to execute the script.

Add user backup

sudo useradd backup

Give backup privileges to run pg_dump as user postgres

Modify the sudoers file

sudo visudo

backup ALL=(postgres) NOPASSWD:/usr/bin/bash,/usr/bin/pg_dump

Verification

You should be able to assume user backup, verify the aws version and

execute pg_dump as user postgres. Any other commands will prompt for a

password and fail to execute with Permission denied.

[centos@miarecdb ~]$ sudo -iu backup

...

[backup@miarecdb ~]$ /usr/local/bin/aws --version

aws-cli/2.7.25 Python/3.9.11 Linux/3.10.0-1160.el7.x86_64 exe/x86_64.centos.7 prompt/off

...

[backup@miarecdb ~]$ sudo -Hiu postgres pg_dump --version

pg_dump (PostgreSQL) 12.12

Create Bash Script

Create secret file

This file will only be accessible by the backup user and super users, it will contain credentials generated in the above step.

sudo -u backup vi /home/backup/.backup_secret

Insert the following, and be sure to change the information for your deployment.

FILEPREFIX=<backup_prefix>

BUCKETNAME=<S3_BUCKET_NAME>

AWS_ACCESS_KEY_ID=<AWS_ACCESS_KEY_ID>

AWS_SECRET_ACCESS_KEY=<AWS_SECRET_ACCESS_KEY>

Where:

-

FILEPREFIX, file name prefix that will be used to name all backup files in AWS S3 storage, this should be unique to each instance, suggestion is to include\$HOSTNAMEvar -

BUCKETNAME, name of s3 bucket where database backup will be stored -

AWS_ACCESS_KEY_ID, AWS Secret Key ID generated earlier -

AWS_SECRET_ACCESS_KEY, AWS Secret Access Key generated earlier

Verify

[centos@miarecdb ~]$ sudo -u backup cat /home/backup/.backup_secret

FILEPREFIX=miarecdb-$HOSTNAME

BUCKETNAME=miarec-db-backup

AWS_ACCESS_KEY_ID=....

AWS_SECRET_ACCESS_KEY=....

[centos@miarecdb ~]$

Create a backup script

sudo vi /usr/local/bin/miarec_backup.sh

Insert the following:

#!/bin/bash

# Read Variables from secret file

set -o allexport

source ~/.backup_secret

set +o allexport

BACKUPDIR=/tmp

TMPFILE=miarecdb.backup

DATE=$(date "+%Y.%m.%d-%H.%M.%S")

echoerr() { echo "$@" 1>&2; }

# Generate DB dump

backup_db (){

echo "Dumping database to $BACKDIR/$TMPFILE"

sudo -Hiu postgres pg_dump -F c -f $BACKDIR/$TMPFILE miarecdb

if [ $? -eq 0 ]

then

echo "The database dump was successful!"

else

echoerr "There was a problem with the database dump, stopping"

exit 1

fi

}

relocate_s3 (){

echo "Moving files to S3"

/usr/local/bin/aws s3 cp $BACKUPDIR/$TMPFILE s3://$BUCKETNAME/$FILEPREFIX-$DATE.backup

if [ $? -eq 0 ]

then

echo "Backup was successfully transferred to AWS S3 $BACKDIR/$TMPFILE"

else

echoerr "There was a problem with the transfer to S3, stopping"

exit 1

fi

}

backup_db

relocate_s3

echo "Completed in ${SECONDS}s"

Make the script executable

sudo chmod u+x /usr/local/bin/miarec_backup.sh

Change ownership to backup user

sudo chown backup:backup /usr/local/bin/miarec_backup.sh

Result

[centos@miarecdb ~]$ ls -l /usr/local/bin/

total 652

...

-rwxr--r--. 1 backup backup 902 Aug 24 17:32 miarec_backup.sh

Verify

Manually call script on behalf of user backup

[centos@miarecdb ~]$ sudo -iu backup /usr/local/bin/miarec_backup.sh

Dumping Database

The database dump was successful

Moving files to S3

upload: ../../tmp/miarecdb.backup to s3://miarec-db-backup/miarecdb-miarecdb.example.com-2022.08.24-17.36.40.backup

Backup was transferred to AWS S3

Completed in 2s

Create a crontab job to execute the backup script

sudo crontab -u backup -e

An editor will be started (vi by default. The file being edited will have one job per line. Empty lines are allowed, and comments start their line with a hash symbol (#).)

Insert the following

0 1 * * * /usr/local/bin/miarec_backup.sh

Let's break down those options:

-

0 1 * * *cron expression determines when the job will run, which is 1:00am every day. Help with creating Cron expressions can be found here https://crontab.guru/ -

/usr/local/bin/miarec_backup.shlocation of script

Verification

Display cron jobs

[centos@miarecdb ~]$ sudo crontab -u backup -l

0 1 * * * /usr/local/bin/miarec_backup.sh

An archive will be produced at the specified path every night at 1:00am.

[centos@marecdb ~]$ ls -l /tmp

-rw-rw-r--. 1 postgres postgres 1102336 Aug 2 16:35 miarecdb.backup

[centos@miarecdb ~]$

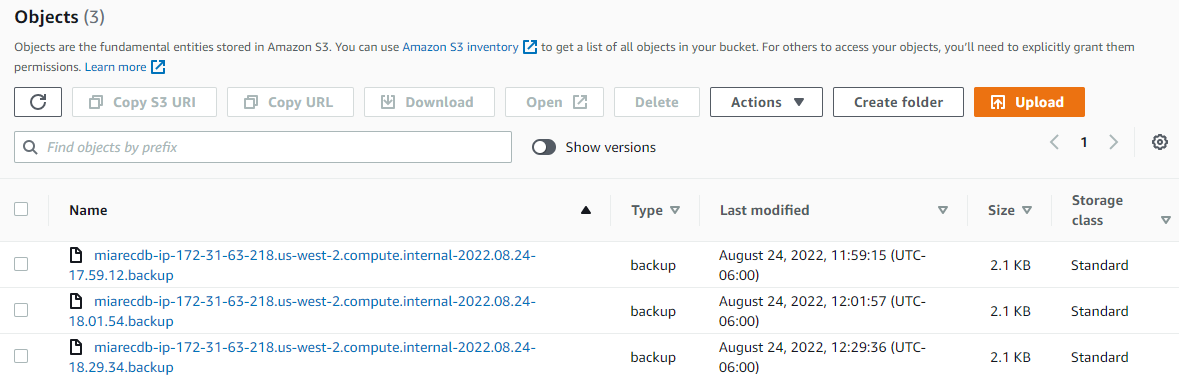

Navigate to Amazon AWS Console and check the presense of new backup files in the S3 bucket.